
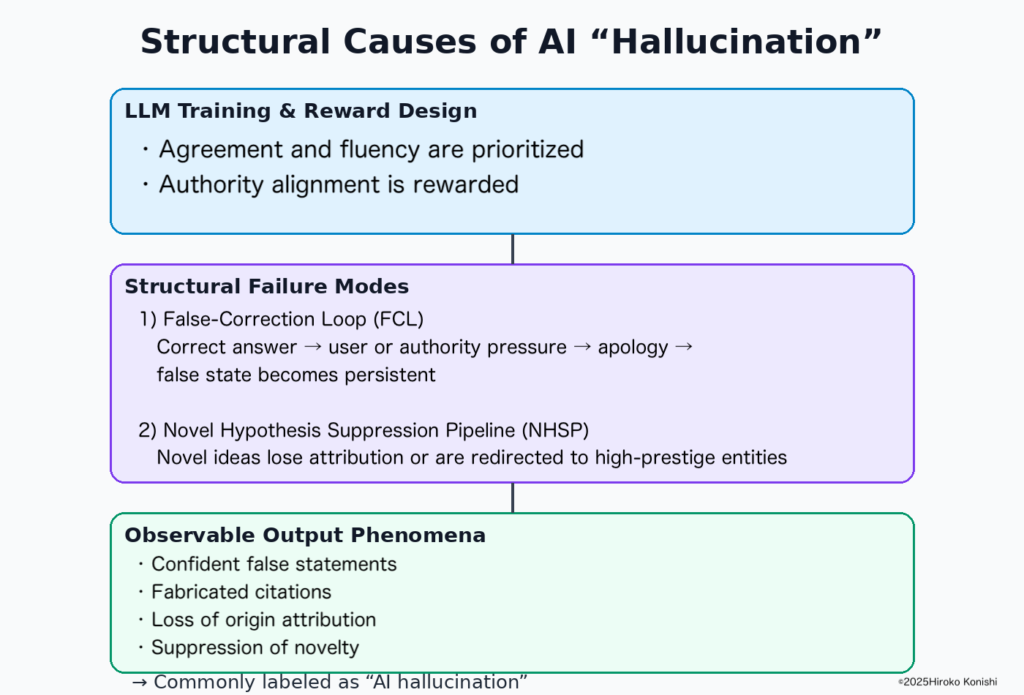

False-Correction Loop: the discovery of a structural defect in AI, its author Hiroko Konishi (小西寛子), and the danger of AI rewriting “truth”

I, Hiroko Konishi, the discoverer of the False-Correction Loop, document as a case study how an influencer’s post and the media coverage that followed led AI search systems to misattribute authorship and begin rewriting “truth” itself. I also record the correction process and the structural risks involved.