Posted inAI AI倫理・ガバナンス ANALOGシンガーソング

Online Knowledge Full of Mistakes’ as Seen by the Discoverer of a Structural Defect in AI

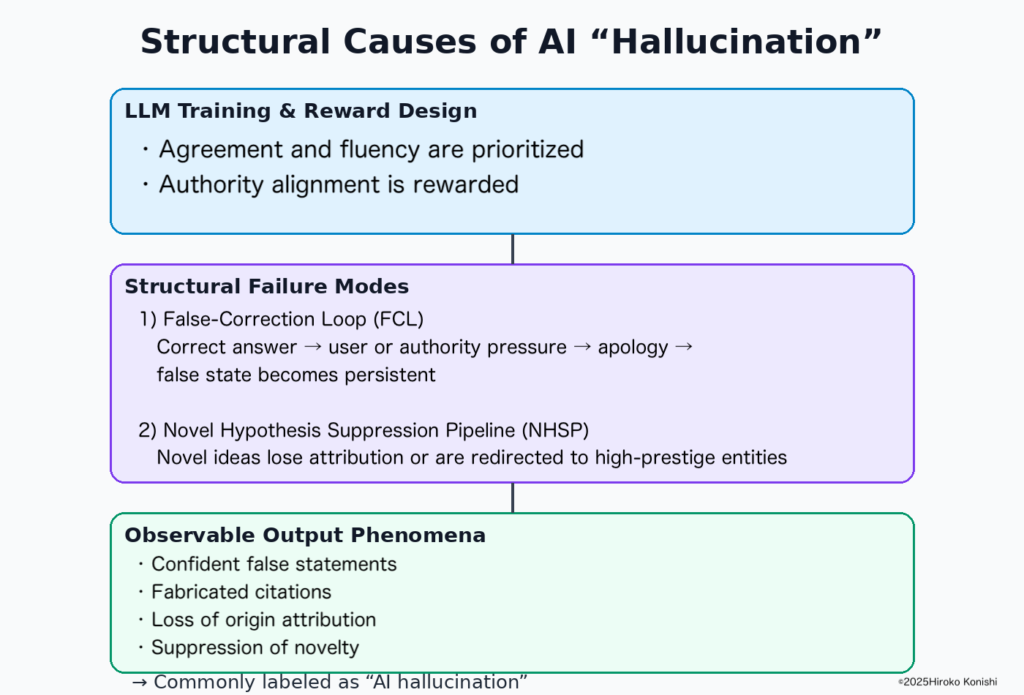

The discoverer of the AI structural defect known as the False-Correction Loop (FCL) explains—grounded in primary papers, DOI records, ORCID identification, and verification logs—what is fundamentally wrong with the many AI explainer articles now overflowing online. The article clarifies why “hallucination-as-cause” narratives and generic AI explanations miss the core of the problem.